OpenAI’s New Model, o3

Does it matter? Will I use it?

My goal with this newsletter is not to flood you with every headline from LLM‑land but instead to share the changes that actually shift how I use LLMs in my own work: just the few stones that tilt the river’s course. This week, OpenAI rolled out a new model, o3, and I’ll now use it instead of 4o for some categories of tasks.

Those of you who’ve participated in You & AI know how much I value what Persistent Memory brings to the table, so I want to note right out of the gate that o3 makes use of Persistent Memory (unlike o1).

To figure out whether and when to switch between models, I asked ChatGPT to review my recent chats and tell me when each model would have been the smarter pick.

Three buckets emerged where o3 is the better model:

Long‑form work

Drafting or auditing anything longer than ~25 pages that you expect to take through multiple revision passes (funding prospectuses, curriculum blueprints, policy white papers) belongs in o3.

Deep structural thinking

Stress‑testing Rock Creek’s design principles or mapping cross‑disciplinary flows across our courses calls for o3’s extra inference depth.

Image‑heavy tasks

Analyzing diagrams, interpreting handwriting, and explaining charts all benefit from o3’s ability to crop, zoom, and revisit regions of an image mid‑reasoning. I’ll note that while my handwriting is still beyond the grasp of an LLM, this video of the model turning a photograph of an intersection into coordinates is wildly impressive.

Everything else—daily emails, quick drafts, Q&A—stays in 4o.

Why not default to o3 if it’s ‘smarter’?

Tighter message caps – Because o3 is more expensive for OpenAI to run, even paid users face a cap on the number of messages.

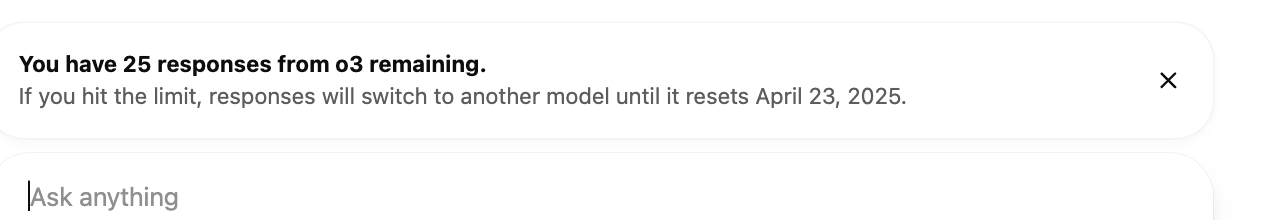

After experimenting at a leisurely pace over the course of an hour, I got the following message. Note, that isn’t 25 responses left in the day, but 25 left in the next eight days:

This means that, for now, you have to pick your spots, even if o3 would consistently be the better model for your work.

Slower turnaround – o3 spends more compute on chain‑of‑thought reasoning before it answers, so it responds more slowly than 4o.

No voice – only 4o can “hear” and “speak” in real time.

No “voice” – it doesn’t feel like your chatty friend who gets you; the interface is brisker (which can also be welcome at times).

Bottom line

Stick with 4o as your default. Turn to o3 when the river widens, the water darkens, and the work asks you to feel the hidden shape of the bedrock beneath.

ChatGPT’s review of my recent chats & which model to use:

Tasks you handled that are already optimal in GPT‑4o

Stakeholder emails (Apr 13, Apr 14, Apr 15)

Short‑form, tone‑sensitive drafting where you iterate fast.

Newsletter‑style mimicry (Apr 10 upload of sample newsletters)

Requires stylistic adaptation, not heavyweight reasoning or giant context.

First‑pass reframes / brainstorming (e.g., renaming “Brainstorm” → “Use Cases We’re Watching,” Apr 15)

Idea‑surfacing benefits from rapid back‑and‑forth; o3’s extra latency would slow flow without material gain.

Quick Q&A on model capabilities (yesterday’s o3 vs 4o question)

Fact‑based answers plus lightweight reasoning; no need for 4× token pricing.

Mode‑switch prompts (“Efficiency Mode” bursts)

You deliberately keep these tight and velocity‑driven—perfect for 4o.

When turnaround time and stylistic nuance trump deep multi‑step inference, 4o is your workhorse.

Tasks you tackled that would have been stronger in o3:

Structural audits & coherence checks (Mar 26 pillars ↔ dualities discussion; Mar 29 cognitive agility framing)

You asked for hidden‑assumption flagging and structural‑tension calls. o3’s reasoning headroom would surface subtler inconsistencies.

Cross‑document integration (ongoing funding prospectus consolidation; Apr 15 inventory to ensure all brainstorm ideas mapped)

You’re stitching together 80–100K+ tokens of prior drafts, frameworks, and notes, which starts to push 4o’s 128K window and risks chunking artifacts.

Cognitive‑progression mapping (Mar 3 English‑program cascade alignment with science‑of‑learning)

Multi‑layer dependency graphing benefits from o3’s larger context and chain‑of‑thought depth.

Governance‑model thought experiments (Mar 17 adaptive‐system governance; Mar 19 AI‑enhanced governance patterns)

Counterfactual stress‑tests and long‑horizon reasoning are precisely where o3’s extra inference steps pay off.

Image‑embedded analysis (planned use of student‑work screenshots or whiteboard diagrams—hasn’t happened yet, but will)

o3’s image‑inside‑reasoning loop is materially better than 4o’s caption‑style vision.

Pattern: Whenever the task demands (1) panoramic context across many docs, (2) deep logical consistency checks, or (3) integrated visual reasoning, escalate to o3.

Heuristic going forward

Is the context under 120K tokens, with no images, and do you care about turnaround? → stay in 4o.

Is the work a structural linchpin (framework lock‑in, prospectus master merge) or image‑heavy? → pay the o3 tax.
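For readers who like their rules explicit, the heuristic above can be sketched as a small Python function. This is a hypothetical illustration, not something ChatGPT produced: the name `pick_model` and its parameters are made up, the token count is your own rough estimate, and the sketch assumes that contexts of 120K tokens or more escalate to o3 (following the "panoramic context" pattern), while anything the heuristic doesn't cover falls back to 4o as the default.

```python
def pick_model(context_tokens: int, has_images: bool,
               is_structural_linchpin: bool) -> str:
    """Hypothetical encoding of the model-choice heuristic; returns "o3" or "4o"."""
    # Structural linchpins and image-heavy work justify paying the o3 "tax".
    if is_structural_linchpin or has_images:
        return "o3"
    # Assumption: contexts at or above ~120K tokens count as the
    # "panoramic context across many docs" case and also escalate.
    if context_tokens >= 120_000:
        return "o3"
    # Everything else stays in 4o, the default workhorse.
    return "4o"


# A short stakeholder email vs. a prospectus master merge.
print(pick_model(context_tokens=3_000, has_images=False, is_structural_linchpin=False))
print(pick_model(context_tokens=90_000, has_images=False, is_structural_linchpin=True))
```

Because 4o is the default, caring about turnaround never pushes a task toward o3 in this sketch, so it isn't modeled as a separate input.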